Docker is a software platform for building and running applications in small, lightweight execution environments called containers. Containers isolate an application’s processes from the other processes on the host while sharing the host’s operating system kernel. They are assigned resources that no other process can access, and they cannot access any resources not explicitly assigned to them. The concept of containerization has been around for some time, but practical applications were few and far between. Then came Docker, an open source project launched in 2013 that helped to popularize the technology. Originally built for Linux, Docker became a multi-platform solution, broadening its applicability and catalyzing the microservices-based approach to development, which has been widely embraced over the past several years.

Different than Virtualization

Docker containers are similar to virtual machines in some ways, including their resource isolation and allocation, and their portability: an image can be moved to any host that runs Docker without losing its functionality.

Yet Docker containers are different than virtual machines in several ways.

First, Docker is a tool intended for data processing and is not meant to be used for data storage (for data storage you will have to use mounted volumes). Every Docker container has a top read/write layer, and once a container is removed, both the layer and its content are deleted. Also, Docker Images use a different approach to virtualization and contain a minimal set of everything needed to run an application: code, runtime, system tools, system libraries and settings. Another difference is that since containers share the host’s kernel instead of each carrying a full guest OS, they take up less space than VMs and boot much faster.
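The point about mounted volumes can be sketched with a small shell function. This is a minimal illustration, not a recommended setup: the volume name demo-data, the mount point /data and the function name volume_demo are hypothetical, and it assumes a host with a running Docker daemon and access to the ubuntu image.

```shell
# Sketch: data written to a named volume outlives the container that wrote it.
# "demo-data" and /data are hypothetical names chosen for this example.
volume_demo() {
  docker volume create demo-data
  # The first container writes a file into the volume and is then removed (--rm).
  docker run --rm -v demo-data:/data ubuntu sh -c 'echo hello > /data/greeting.txt'
  # A second, brand-new container mounts the same volume and reads the file.
  docker run --rm -v demo-data:/data ubuntu cat /data/greeting.txt
  # Remove the volume when it is no longer needed.
  docker volume rm demo-data
}
```

Calling volume_demo should show that the file survives even though the container that created it no longer exists, because the file lives in the volume rather than in the container's read/write layer.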

Images and Containers

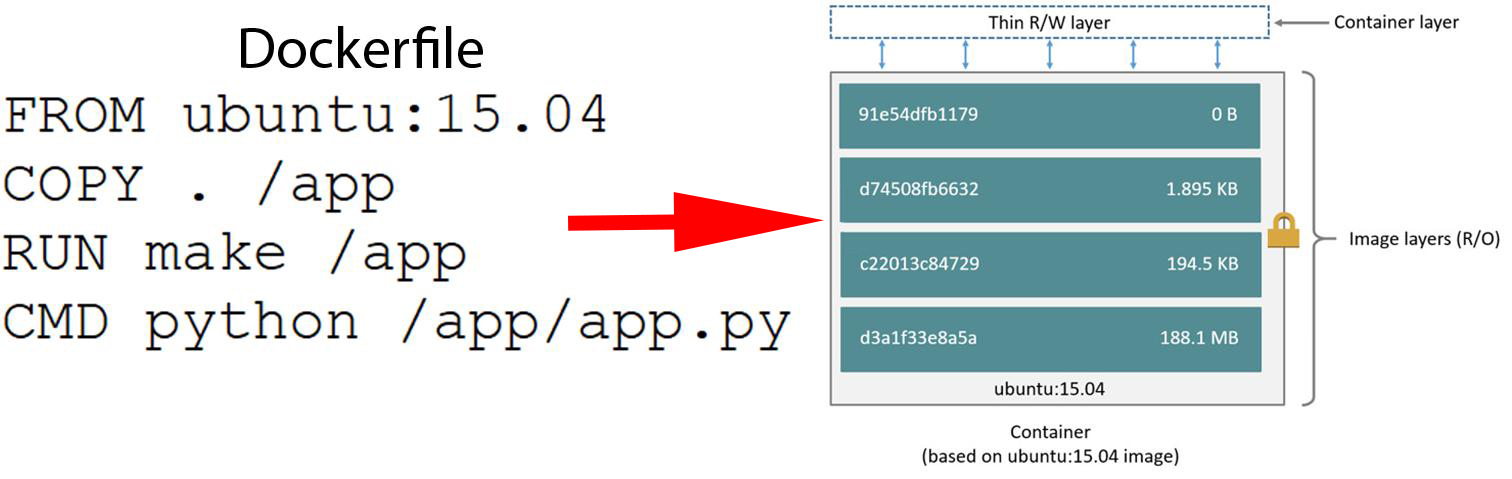

(Source: docs.docker.com)

A Docker Image is a set of stacked layers, each of which represents an instruction from the Dockerfile. A Dockerfile is a text document that contains all the commands users can call in the command line interface to assemble an image. This lets users create automated builds that execute several command-line instructions in succession.

When the command docker images is run, the user gets a list of the names and properties of all locally present Images.

REPOSITORY TAG IMAGE ID CREATED SIZE

ubuntu latest 3556258649b2 10 days ago 64.2MB

The key fields of the list include the following:

Repository - This is the name of the repository where you pulled the Image from. If you plan to push the Image, this name must be unique. Otherwise, you can store the Image locally and name it however you want.

Tag - Tags are used to convey information about the specific version of the Image. A tag is an alias for the Image ID. If no tag is specified, then Docker automatically uses the latest tag as a default.

Image ID - This is the identifier Docker assigns to the Image locally. The list shows a shortened form of it.
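The relationship between repository and tag, including the latest default, can be mimicked in a few lines of shell. This is only a simplified model written for illustration, not Docker's own parser: the function name split_image_ref is hypothetical, and digest references (name@sha256:...) are deliberately not handled.

```shell
# Split an image reference into repository and tag, defaulting the tag to "latest".
# Simplified model: digest references (name@sha256:...) are not handled.
split_image_ref() {
  ref=$1
  last=${ref##*/}                 # final path segment, e.g. "ubuntu:18.04"
  case $last in
    *:*) repo=${ref%:*}; tag=${last##*:} ;;   # explicit tag after the last colon
    *)   repo=$ref;      tag=latest ;;        # no tag given: fall back to latest
  esac
  printf '%s %s\n' "$repo" "$tag"
}

split_image_ref ubuntu                  # prints: ubuntu latest
split_image_ref ubuntu:18.04            # prints: ubuntu 18.04
split_image_ref localhost:5000/ubuntu   # prints: localhost:5000/ubuntu latest
```

Only the last path segment is searched for a colon, so a registry address with a port, as in the third call, is not mistaken for a tag.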

A Docker Container is a runnable instance of a Docker Image. When a new Docker Container is created, Docker adds a new writable layer on top of the underlying Image layers. This layer is often referred to as the container layer. All changes made to the running container, such as writing new files and modifying or deleting existing ones, are added to this thin writable container layer. Multiple containers can be run out of one Image.
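One way to observe the container layer is the docker diff command, which lists what a container has changed relative to its Image. The sketch below assumes a running Docker daemon; the container name layer-demo, the file /tmp/scratch.txt and the function name layer_demo are hypothetical.

```shell
# Sketch: inspect what a container has written to its writable layer.
layer_demo() {
  # Start a container from the ubuntu image and keep it alive in the background.
  docker run -d --name layer-demo ubuntu sleep 300
  # Create a file inside the running container; it lands in the container layer.
  docker exec layer-demo touch /tmp/scratch.txt
  # List paths added (A), changed (C) or deleted (D) relative to the image.
  docker diff layer-demo
  # Removing the container discards its writable layer; the image is untouched.
  docker rm -f layer-demo
}
```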

To list running containers, use the docker ps command. To generate a list of all containers (including terminated ones), use the docker ps -a command.

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

626b43558b2 ubuntu "/bin/bash" 6 minutes ago Up 10 seconds xenodochial_leakey

In the output above, the most important fields for a Container are:

Container ID - a unique, locally assigned identifier for the Docker Container.

Names - a human-readable name for the Docker Container.

Running Containers

A container is a process that runs on a local or remote host.

To run a container, use docker run. With the run command a developer can specify or override image defaults related to:

- Detached (-d) or foreground running

- Container identification (--name)

- Network settings

- Runtime constraints on CPU and memory

Stopped containers can be restarted with the docker start command.
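The options listed above can be combined in a single docker run call. The following is a sketch under assumptions: the container name web-demo, the limits of half a CPU and 256 MB of memory, the nginx image and the function name run_demo are all illustrative choices, and a running Docker daemon is required.

```shell
# Sketch: run a detached, named container with resource limits, then stop and restart it.
run_demo() {
  # -d runs in the background, --name sets the name, --cpus/--memory cap resources.
  docker run -d --name web-demo --cpus 0.5 --memory 256m nginx
  docker ps                # web-demo should be listed as running
  docker stop web-demo
  docker ps -a             # web-demo should now be listed as exited
  docker start web-demo    # restarts the same container, not a new one
  docker rm -f web-demo    # clean up
}
```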

Processes

Now, let’s look inside the Docker Container. Directories in the Container are organized according to the Filesystem Hierarchy Standard, which defines the directory structure and directory contents in Linux distributions. A Container runs only as long as the main process inside it is running. In the Docker container, the main process always has PID 1. You can start as many secondary processes as your container can handle, but as soon as the main process stops, the entire container stops with it.
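Both claims about the main process can be checked from the command line. This is a small sketch assuming a running Docker daemon and default settings (no --init flag); the container name short-lived and the function name pid1_demo are hypothetical.

```shell
# Sketch: the main process is PID 1, and the container ends when it exits.
pid1_demo() {
  # The shell is the container's main process, so $$ expands to its own PID,
  # which should be 1 under default settings.
  docker run --rm ubuntu sh -c 'echo "main process PID: $$"'
  # Here sleep is the main process; when it exits after 2 seconds,
  # the container stops on its own.
  docker run --name short-lived ubuntu sleep 2
  docker ps -a --filter name=short-lived   # status should read "Exited"
  docker rm short-lived
}
```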

Registry

The place to store and exchange Images is called a Docker Registry. The most popular registry is Docker Hub. Before you Dockerize any service, it’s a good idea to visit the Hub first and see if an Image for it already exists.

A Registry is a centralized platform where developers and machines can store and exchange Images with a single command. Note that a Registry does not store running Containers, only Docker Images.

To download an Image from the Hub, use: docker pull [image name]

To upload an Image to the Hub, use: docker push [image name]

In addition, Docker Hub includes a valuable feature for learners: many Image pages present the Dockerfile along with an explanation, which developers can use to learn how to create their own Images.
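A typical round trip through the Hub combines pull and push with docker tag, which gives a local Image a repository name you are allowed to push to. The sketch below is illustrative only: exampleuser stands in for a real Docker Hub account, ubuntu-copy and 1.0 are made-up repository and tag names, and publish_demo is a hypothetical function name.

```shell
# Sketch: download an image, rename it under your own account, and upload it.
# "exampleuser" is a placeholder for a real Docker Hub account name.
publish_demo() {
  docker pull ubuntu:18.04
  # Add a second name (repository:tag) pointing at the same image ID.
  docker tag ubuntu:18.04 exampleuser/ubuntu-copy:1.0
  # Authenticate against Docker Hub; prompts for credentials.
  docker login
  docker push exampleuser/ubuntu-copy:1.0
}
```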

Dockerfile

Before attempting to write a Dockerfile, a sensible prerequisite is to learn the basic instructions. Usually, a Dockerfile consists of lines of code like these:

## Comment

INSTRUCTION arguments

Instructions are not case-sensitive, so upper case is not mandatory, but writing the instructions in upper case makes it easier to distinguish them from the arguments. Here is a Dockerfile example:

FROM ubuntu:18.04

COPY . /app

RUN make /app

CMD python /app/app.py

FROM

FROM - This is the only mandatory instruction. It initializes a new build and sets the Base Image for following instructions. The specified Docker Image will be pulled from the Docker Hub registry if it does not exist locally.

Example:

FROM debian:buster - pulls and sets the debian image with the tag buster

COPY

As its name indicates, the COPY instruction copies files and directories from the build context and adds them to the filesystem of the Image.

Example:

COPY /project /usr/share/project

Note that an absolute source path is resolved relative to the root of the build context, not the root of the host filesystem. If COPY /users/home/student/project /usr/share/project is used, the build will fail with an error that /users/home/student/project does not exist, because the build context has no subdirectory users/home/student/project.
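To make the build-context rule concrete, the following sketch assembles a throwaway context directory and builds from it. The image name copy-demo, the file readme.txt and the function name context_demo are hypothetical, and the build itself assumes a running Docker daemon.

```shell
# Sketch: COPY source paths are looked up inside the build context directory.
context_demo() {
  ctx=$(mktemp -d)                       # this directory becomes the build context
  mkdir "$ctx/project"
  echo 'hello' > "$ctx/project/readme.txt"
  cat > "$ctx/Dockerfile" <<'EOF'
FROM ubuntu:18.04
# /project is resolved inside the build context, not from the host's root.
COPY /project /usr/share/project
EOF
  # The last argument tells Docker which directory to use as the build context.
  docker build -t copy-demo "$ctx"
  docker run --rm copy-demo cat /usr/share/project/readme.txt
}
```

The build succeeds here only because a project directory exists directly under the context root; a host path outside that directory would not be found.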

RUN

The RUN instruction executes any commands in a new layer on top of the current image.

CMD

The main purpose of CMD is to provide defaults for an executing container, typically the command to run when the container starts. Only one CMD instruction takes effect in a Dockerfile: if you list more than one CMD, the last one wins.

For those wanting to learn more about instructions, more information is available in Docker Docs.

Docker Networking

Docker is an excellent tool for managing and deploying microservices. Microservice architecture is increasingly popular for building large-scale applications. Rather than using a single codebase, applications are broken down into a collection of smaller microservices. One of the reasons Docker containers and services are so powerful is that you can connect them together.

Docker’s networking subsystem is pluggable, using drivers. Several drivers exist by default, and provide core networking functionality. These include:

Bridge: The default network driver, it is usually used when applications running in standalone containers need to communicate.

Host: The host network mode is for standalone containers. This removes the network isolation between the container and the Docker host, enabling use of the host’s networking directly.

Overlay: The overlay network driver connects multiple Docker daemons and enables swarm services to communicate with one another.

Macvlan: The macvlan driver allows developers to assign a MAC address to a container, making it appear as a physical device on a network.

none: The none driver disables all networking for the specified container.

Create networks

To create a network, a developer need not use the --driver bridge flag since bridge is the default driver, but this example shows how to specify it. When you create a network, Docker assigns it a non-overlapping subnetwork by default. You can override this default and specify the subnetwork directly with the --subnet option, and you can also set the gateway with --gateway. On a bridge network you can only create a single subnet.

$ docker network create --driver bridge --subnet [subnet] --gateway [gateway] [network name]

To connect containers to a network, developers use the --network flag. On user-defined networks, developers can also choose the IP addresses for the containers with the --ip and --ip6 flags when they are started. A container’s ports can also be published on specific host ports using the -p flag. Only one network connection is allowed when using the docker run command, so developers who need to connect a container to other networks can do that afterwards with the docker network connect command. Here’s an example:

$ docker run -dit --name [container name] --network [network name] --ip [ip] -p [host port]:[container port] [image name]

$ docker network connect [network name] [container name]
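With the placeholders filled in, the commands above might look like the sketch below. Every concrete value is an assumption made for illustration: the subnet 172.28.0.0/16, the gateway 172.28.0.1, the address 172.28.0.10, the port mapping 8080:80, the names demo-net, second-net and net-demo, the nginx image and the function name network_demo. A running Docker daemon is required, and the subnet must not collide with an existing network on the host.

```shell
# Sketch: create a bridge network with an explicit subnet, attach a container
# with a fixed IP, then connect the same container to a second network.
network_demo() {
  docker network create --driver bridge --subnet 172.28.0.0/16 --gateway 172.28.0.1 demo-net
  docker network create second-net       # bridge driver and subnet chosen by default
  # docker run accepts one network; -p publishes container port 80 on host port 8080.
  docker run -dit --name net-demo --network demo-net --ip 172.28.0.10 -p 8080:80 nginx
  # Additional networks are attached after the container has been created.
  docker network connect second-net net-demo
  docker network inspect demo-net        # shows the subnet, gateway and attached containers
  # Clean up the container and both networks.
  docker rm -f net-demo
  docker network rm demo-net second-net
}
```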

Conclusion

With Docker, it is easy and straightforward to install, test and run software on almost any computer, whether a desktop, a web server or a cloud-based platform. Another stand-out characteristic of Docker is how well it supports developing a single application as a suite of microservices: small services, each running in its own process and communicating with the others through lightweight mechanisms. So, if you are developing multiple services and want them to interconnect, Docker is a good option to consider. The repository of free public Docker images and the comprehensive documentation also make it easier for beginners to get started.